In early March 2026, Careem ran into serious trouble. Amazon announced that its data centers in the UAE and Bahrain had been hit by drone strikes, with full recovery expected to take a long time — making it the first known case of a major American tech company’s infrastructure being knocked offline by military action. Careem’s engineers pulled off something remarkable: a cross-regional infrastructure migration, completed in a single night. By morning, the lights were back on.

Careem is ‘the everything app’ for the Middle East that bundles ride-hailing, food and grocery delivery, and payments across more than 100 cities in 14 countries, stretching from Morocco to Pakistan. The outage touched millions of people.

The episode is an illustration of a debate playing out in nearly every engineering team today: automation and AI are woven into development workflows, but trusting them in high-stakes environments is a question nobody has fully answered yet.

The 2025 DORA report states that AI in software development primarily amplifies whatever strengths and weaknesses an organization already has, and the impact depends less on the models themselves than on the quality of processes and cloud governance. If a company hasn’t built a solid engineering foundation, AI won’t magically fix problems as they arise — it may simply scale the same mistakes, faster.

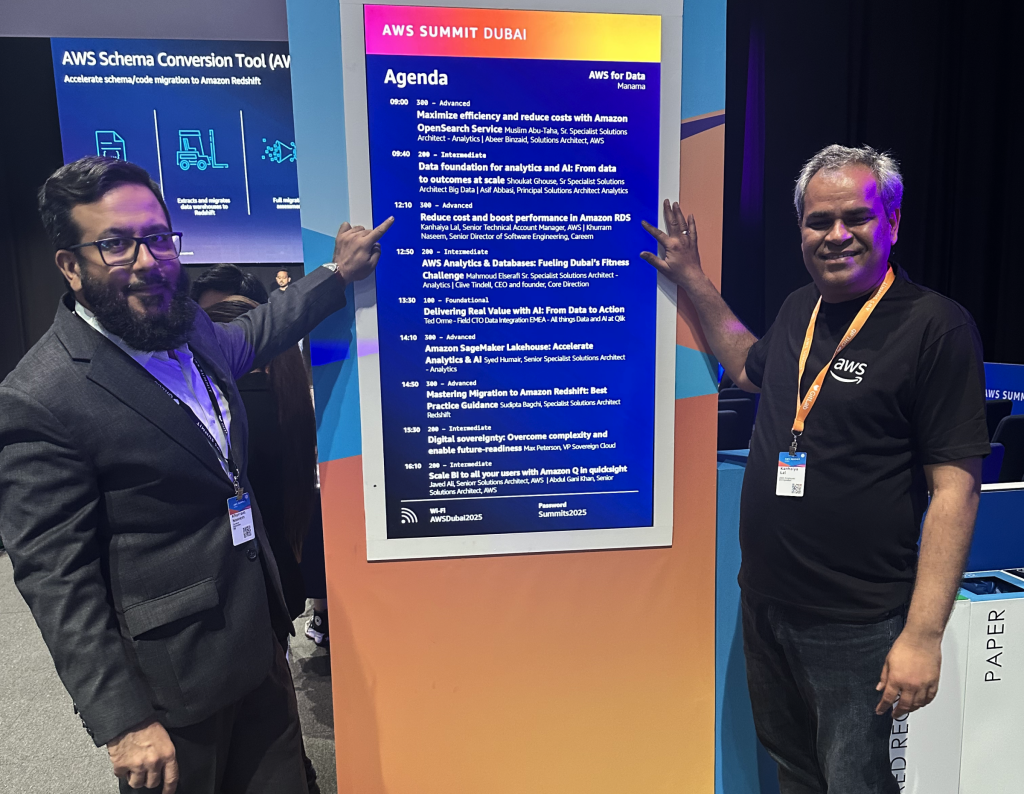

Today we’re speaking with Khurram Naseem, who has been running engineering infrastructure at Careem for over 12 years — about what you can and can’t yet trust AI with in production, why the classic engineering org pyramid is turning into a diamond, and a framework he’s been building in his spare time to keep AI-generated code from becoming a maintenance nightmare.

2Digital: You’ve been building engineering infrastructure at Careem for over 12 years. If we look at any regular Monday morning — what is visually different about how your engineers work today compared to their work a couple of years ago?

Khurram: Even more than 12 years, actually. The era we are witnessing in the form of AI advancement is just mind-boggling – the speed is enormous.

Learning, knowing things, automation – you now have full visibility into any kind of operational work in just a minute. You ask a question, and the reports get ready.

Previously, you had to build specific tooling to pull data and do the formatting. That visibility has increased manifold. A few prompts – and the result is on your screen.

2Digital: Your roots are in database architecture – replication, query optimization, deeply manual calls. Where do you already trust AI to take over, and where do you still draw the line?

Khurram: At this stage, we are not relying on AI to make these kinds of changes in production, to be honest. But I am positive it will be happening much sooner – we are already observing it in staging and QA environments.

The thing is, we cannot take that risk now. We operate in 100 cities, across 14 countries. If anything goes south, it will be a huge disaster.

We’ve been learning from some of the tech giants; there was a specific case at Amazon where an AI recommendation caused a disruption. So we are a bit skeptical at the moment. Until we are 100% sure, we will not hand over the production environment to AI.

2Digital: When – or if – you roll out AI across the engineering team, what’s the expected gain?

Khurram: I think a couple of things, for sure. Clarity on what needs to be done, time efficiency, and cost – those are the top high-level things we can achieve.

We are already taking action, specifically on document generation. AI is tightly integrated with our workflow end-to-end. On code generation in particular, all our software engineers are already getting that assistance. We started with Copilot, moved to Codex from OpenAI, and now we’re running Anthropic tools, with some teams also experimenting with Gemini. The tooling is evolving fast, and we’re still evaluating what’s the best fit – for Careem specifically and for the industry at large. RFC generation, ticket generation – those remain human-in-the-loop for now.

Beyond that, we are working heavily on training our own LLM agent – based on feedback and based on our huge datasets – for customer personalization. So AI is heavily used across every department, actually.

2Digital: AI assistants are trained largely on public code and public data. But Careem operates across 14 countries with regional languages, local regulations, and a very specific customer base. Does that limit what generic AI tooling can actually do for you?

Khurram: On personalization – absolutely yes. Generic models simply don’t know what our customers want. We do. We have years of behavioral data across the region, spanning ride-hailing, food, payments, and more. That proprietary visibility is what lets us build meaningful recommendations – not just “here’s a nearby restaurant” but genuinely useful, contextually tuned suggestions. That’s a competitive edge no off-the-shelf model can replicate.

Beyond personalization, AI is touching other areas too. Our procurement teams are integrating AI with financial data. And one more area in particular – our call center. We are working on replacing a call center agent with an AI agent because there are now a lot of tools and applications available that can handle the kinds of queries coming in and resolve them.

Those are just the headline examples. AI is threading its way through several more departments than most people would expect.

2Digital: Are there any hidden costs to AI adoption that weren’t immediately obvious – things you encountered unexpectedly along the way?

Khurram: There are two areas we keep a close eye on. The first is token cost – we want to make sure it doesn’t spiral out of control. That’s been on our radar from day one.

The second, and more important one, is the cognitive load on our engineers. When you start generating a lot of code, reading and maintaining it can become a nightmare. You have to be very mindful about that.

That’s actually what pushed me to take my own initiative and start building a framework – something that will first help my company, and then we’ll open-source it. That’s my vision. I’ve identified three areas it needs to address.

First, what I call AI bloating – code starts piling up in your repository in a way that’s very hard to maintain.

Second, reviewing those lines of code becomes a real challenge for senior engineers.

And third, how do you reward your teams for working smart?

For example, in agile terms, if a feature is a small story point, it should not result in 500 lines of generated code. I’m giving a hypothetical here, but the point is: if a task is small, the code should reflect that. So I’m building a tool to safeguard against over-generation across all three of those dimensions.
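
The size check Khurram describes could be sketched roughly as follows. This is not his framework, which the interview does not detail; it is a minimal illustration that assumes a fixed budget of added lines per story point. The names `ChangeSet`, `line_budget`, and `is_over_generated`, and the value of 60 lines per point, are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical budget: added lines tolerated per story point.
# The value 60 is an illustrative assumption, not a figure from the interview.
LINES_PER_STORY_POINT = 60


@dataclass(frozen=True)
class ChangeSet:
    """A pull request summarized by its estimate and its size."""
    story_points: int
    lines_added: int


def line_budget(story_points: int, lines_per_point: int = LINES_PER_STORY_POINT) -> int:
    """Return the maximum number of added lines for a task of this size."""
    # Treat unestimated or zero-point work as one point so the budget is never zero.
    return max(story_points, 1) * lines_per_point


def is_over_generated(change: ChangeSet, lines_per_point: int = LINES_PER_STORY_POINT) -> bool:
    """Flag a change whose added lines exceed the budget for its story points."""
    return change.lines_added > line_budget(change.story_points, lines_per_point)


small_task = ChangeSet(story_points=1, lines_added=500)
large_task = ChangeSet(story_points=8, lines_added=300)
print(is_over_generated(small_task))  # True: 500 lines against a budget of 60
print(is_over_generated(large_task))  # False: 300 lines against a budget of 480
```

A check like this would most plausibly run as a review-time warning rather than a hard block, since some small tasks legitimately need a lot of code (generated fixtures or migrations, for instance).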

2Digital: You mentioned this was your own initiative. How has your day-to-day changed as director of engineering? Does AI rearrange your priorities?

Khurram: Well, for one – we’ll have more time for lunch. (laughs) But seriously, whatever time we save, there’s no shortage of challenges waiting. We’re revisiting every area, asking: can this be automated? How do we hand it off without losing control?

The bigger shift is in how we think. Looking back at my 25 years in the industry, I can say that, in the past, code was almost entirely deterministic. If condition A is met, do B. Simple. Now, with LLM reasoning capabilities, if condition A is met, you might have options B, C, X, Y, Z – and you have to choose the best one. It’s no longer deterministic; it’s become probabilistic.

But probabilistic thinking doesn’t fit every situation. Sometimes you need a hard, deterministic decision. So the real skill now is judgment – knowing when to let the model reason freely, and when to say: this is the way, just do it. That’s the paradigm shift we’re all navigating.

2Digital: Your company has such a huge data volume accumulated over the years. Do you see AI making mistakes because it lacks that historical context – what was done five or ten years ago?

Khurram: To be honest, we actually avoided an incident here – and it wasn’t because of data gaps. It came down to how the tool interpreted its scope.

This is why, as I keep coming back to in this conversation, you have to be mindful. Review what any AI tool is recommending before you give it the green light to execute. In this case, one of our engineers was working in a staging environment — and the script generated by the AI tool was about to delete a production cluster. Terrifying. God forbid it had gone through – we’d be looking at massive downtime, similar to what happened with Amazon.

That’s exactly why we don’t allow any AI agent to touch production. Not yet. And beyond the near misses, we’re also seeing cases where the recommendations coming from AI agents simply aren’t accurate. We’re watching that closely.

2Digital: Is AI involved in flagging higher-level infrastructure calls, like capacity planning, cost, or security?

Khurram: It’s very much involved. We have different agents integrated across different areas: cost, procurement, document generation, RFC generation. It goes well beyond engineering.

In terms of incidents, the only one I’m aware of is the one I already shared – where we asked an agent to clean up a staging environment, and the script it produced was on the verge of deleting a production instance instead. We caught it in time, and the reason nothing happened is simply that we have a hard rule: no AI agent touches production. Full stop.

2Digital: From what you can observe, there don’t seem to be widely adopted frameworks for AI decision-making yet – it largely comes down to the human in the loop. But do you have any specific frameworks implemented on your end?

Khurram: We’ve built in-house tools, and we’re extensively using MCP servers – connecting our LLMs to our internal tooling and AWS services. So an in-house agent can query an AWS service, pass a command, and get it executed. But here’s the key: if an engineer or an AI agent tries to issue a destructive command, like deleting a production instance, it simply won’t go through. We’ve enforced that at the policy level using IAM policies on AWS. It doesn’t matter what instruction is given to the MCP server – the execution is blocked at the core.
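
A policy-level block of this kind might look something like the sketch below. It is not Careem's actual policy, which the interview does not show. It assumes production resources carry an `Environment=production` tag, and the list of destructive actions is an illustrative selection. The code only builds the IAM policy document; attaching it to a role is left out.

```python
import json

# Assumption: production resources are tagged Environment=production.
PRODUCTION_TAG_KEY = "Environment"
PRODUCTION_TAG_VALUE = "production"

# IAM action names for destructive operations; the selection is illustrative.
DESTRUCTIVE_ACTIONS = [
    "ec2:TerminateInstances",
    "rds:DeleteDBInstance",
    "rds:DeleteDBCluster",
]


def build_deny_policy() -> dict:
    """Build an IAM policy document that denies destructive actions on production."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyDestructiveActionsOnProduction",
                "Effect": "Deny",
                "Action": DESTRUCTIVE_ACTIONS,
                "Resource": "*",
                # Apply the deny only to resources carrying the production tag.
                "Condition": {
                    "StringEquals": {
                        f"aws:ResourceTag/{PRODUCTION_TAG_KEY}": PRODUCTION_TAG_VALUE
                    }
                },
            }
        ],
    }


policy = build_deny_policy()
print(json.dumps(policy, indent=2))
```

Because an explicit `Deny` in IAM overrides any `Allow`, a statement like this holds regardless of what other permissions the agent's role has, which matches the point that the instruction given to the MCP server does not matter.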

That said, a bad recommendation can still come through. An LLM might suggest a cost-saving measure that sounds reasonable but isn’t – and that’s where you need human review before anything gets implemented. A human in the loop is essential.

And honestly, we’re seeing the consequences of skipping that step play out everywhere. There was a case where Meta’s own director of AI safety was testing an agent on her inbox: the tool lost its instructions mid-run and deleted everything before she could stop it. These things are already happening.

So first test in a sandboxed environment, make sure nothing can harm your systems or leak sensitive data, and treat security as a top priority. NVIDIA actually released a framework similar to the open-source agentic tooling out there, but with very tight security guardrails built in – and I think that’s exactly the direction things need to go.

In my 25 years in this industry, I’ve seen this pattern before. Every major shift brings a wave of enthusiasm, then a reckoning. This one is no different.

2Digital: Do you see AI already having an economic effect? Can you measure it, or is it still too early?

Khurram: Absolutely, and the clearest sign is in the shape of organizations themselves. In the past, you had a pyramid: a CTO at the top, then senior VPs, directors, and so on – all the way down to recent graduates. That’s changing considerably. We’re moving toward a diamond shape.

What that means in practice is less reliance on both ends: fewer recent graduates at the bottom and a leaner senior management layer at the top. The bulk of the workforce is shifting toward L9 and L10 engineers (senior, highly specialized roles in big-tech leveling systems – Ed.) – people who deeply understand these technologies and can drive automation themselves. You need very few people to manage them because they know how to work with AI and get things done.

Once your organization reaches that shape, the engineering headcount cost drops considerably. So yes, the economic impact is real, and it’s already happening.

2Digital: If a CTO of a 50-person tech company came to you and said they have a budget to change just one thing around AI this year, where would you point them? Let’s say it’s a SaaS company.

Khurram: Honestly, SaaS is a tough spot right now. According to the Microsoft CEO, that model is going to face a serious challenge – whether it gets fully disrupted or partially, I can’t say, but the pressure will be there.

My recommendation, though, would be to integrate AI directly into your engineering workflow. Tools like Anthropic’s Claude, their coding models – start there. What you get is cleaner code in a fraction of the time, and you ship more features faster than you ever could before. Documentation, code generation, the whole development cycle becomes more organized. It adds real, measurable value. That’s where I’d put the budget.

2Digital: We’ve covered a lot of ground today. Did we miss anything important?

Khurram: One thing I’d really like people to hear: AI is not as complicated as it looks from the outside. A lot of people assume it’s this deeply technical, hard-to-grasp thing, but it’s not. Anyone – regardless of industry, department, or age – can pick it up quickly. And the beautiful thing is that you can use AI itself to structure your learning. Treat it as your assistant, experiment with it, see what it can do for you personally and for your organization.

I’ve spent 25 years in this industry, and I’m in a senior leadership role, but I still make it a point to keep learning new tools myself. Not just for the organization but, honestly, for my own sanity. You have to stay curious. That’s the one thing I’d recommend to everyone: don’t stop learning on an individual level. That’s where it all starts.