AI has learned to handle letters, numbers, and words brilliantly — it constructs text, interprets commands, writes code, analyzes images. But all of this belongs to a world of symbols, where a mistake usually costs nothing: “rewrite,” “fix it,” “try again.” Physical reality operates differently. Cups fall, surfaces slip, light shifts, things wear out — and a “wrong answer” can mean broken equipment, human injury, and million-dollar losses.

We want to understand how artificial intelligence learns the physical world — not through letters and meanings, but through direct interaction: sensors, motion, contact, environmental resistance. In this conversation with Hleb Dapkiunas, Head of Robotics at Andersen Lab, we try to work out how to measure the progress of physical AI without fooling ourselves with demos, and what understanding the physical world will ultimately give to the intelligence itself. Will this bring us closer to AGI, or will it instead expose the limits of current approaches?

LLMs have intuition. But it’s made entirely of letters and numbers.

2Digital: Let’s start with the basics. What does it mean for AI to learn the physical world? Are we talking about sensors and precise motor control, or about understanding cause and effect — “if I push it, it’ll fall” — at the level of intuition?

Hleb: When it comes to intuition, in some statistical form it already exists even in standard LLMs. After all, they’re essentially trying to predict each next token to construct a text. There’s genuinely something in that process that resembles human intuition.

A major impasse today is hardware. The human body packs an enormous number of receptors into a tiny area. Replicating comparable sensing in a robot — not just in one small zone, but across the entire surface of an artificial skin — is extraordinarily complex and prohibitively expensive.

Beyond that lie skills that remain out of reach for robots, yet go completely unnoticed by people in daily life. Call them persistence and adaptation. If we cut a finger, we instinctively know to avoid sharp edges. We simply know not to grip a glass too hard. If we pick up a cup and find the drink unexpectedly hot, we’ll toss it aside — not onto the person next to us.

For a robot, each of these actions is a non-trivial task, made exponentially harder by the fact that it must execute them in real time, amid constant interference: noise, vibration, wet or slippery surfaces, shifting light, temperature changes — any variable can shift at any moment.

So at every moment, the robot must register environmental changes, integrate all of that data, recalibrate, and make a decision almost instantaneously. There’s no room for lag. And it must do this dozens, if not hundreds, of times per second.

2Digital: Humans have various senses working simultaneously. Can a modern robot do anything comparable?

Hleb: No, nothing like that exists yet. On top of that, there’s still no unified standard in the market: what those senses should be, how to digitize them in a way that works universally across robots, or how to combine all the resulting data afterward.

When we talk about LLMs, one thing is worth keeping in mind: their understanding of the world is built from data, not from physical experience. Inside the model, that data is represented as tokens and vector embeddings. Even when we feed them images or video, the object being analyzed ultimately gets reduced to vector representations of features. AI has no direct grasp of the physical world: it has never felt it, only read a very detailed book about it.

If the book never mentions that nudging a cup can send it off the edge of a table, that idea may simply never occur to the system. So when we start training a robot in the real world, we have to teach it to predict the future: to calculate, dozens or sometimes hundreds of times per second, what will happen if the cup is pushed, squeezed too hard, or flipped upside down while it still has coffee in it. And that’s where a whole set of problems immediately surfaces.

2Digital: What problems come up first?

Hleb: The absence of unified standards significantly slows down training. We can’t take one robot’s knowledge and share it with another — they often speak different languages: different sensors, different data formats, different ways of describing actions.

Then there’s the cost of errors. When an LLM makes a mistake, we simply say: “that’s wrong, do it this way.” That’s it — no harm done. A mistake in physical AI can damage the robot itself, the surrounding equipment, and cost serious money. In scenarios involving humans, it can be simply unacceptable.

This has given rise to two approaches for collecting data relatively safely. The first is human-in-the-loop: a person controls the robot while the machine observes, organizes the information, and builds behavioral patterns from successful attempts. The second is synthetic data — reproducing the robot’s actions in a virtual environment, running simulations, and building datasets there. The catch is that accurately modeling physical reality in a simulation is often harder than collecting data in the real world.

Robots everywhere — not tomorrow. But the pace could accelerate sharply

2Digital: That makes it sound like a future where robots surround us everywhere is still a long way off.

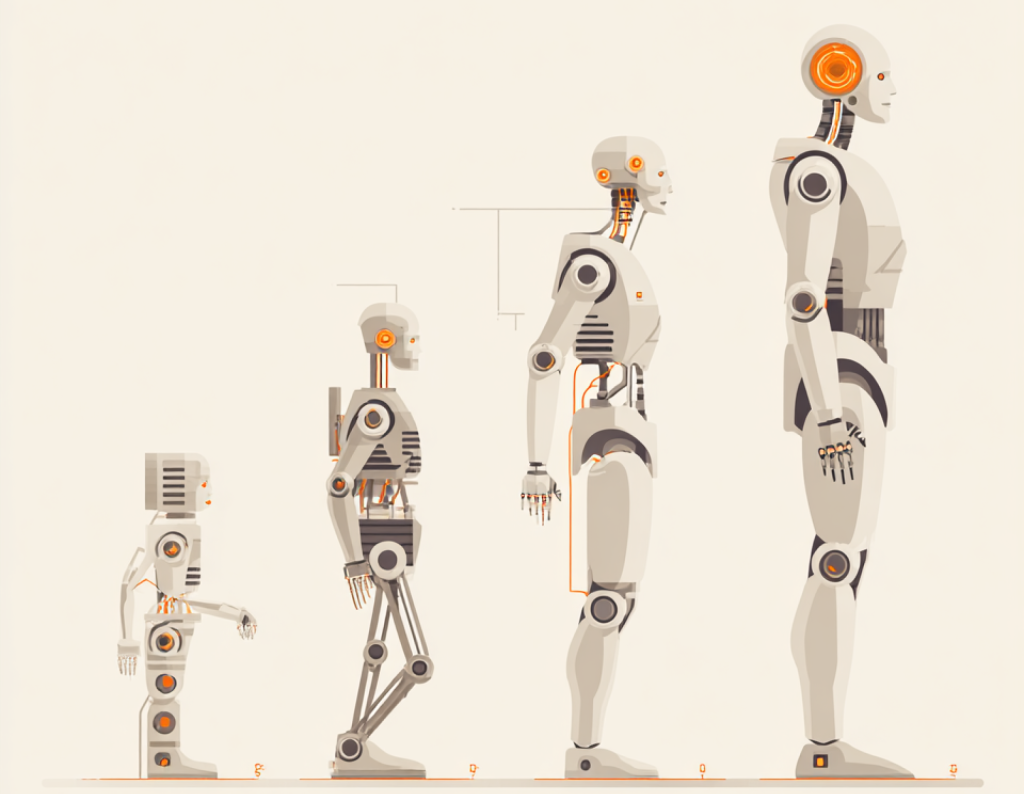

Hleb: At this stage, whatever breakthroughs companies choose to trumpet, there are fundamental problems that will need to be solved first. But remember what the earliest LLMs available to the general public looked like: people called them toys that constantly made mistakes, wrote clumsily, and hallucinated. Today the picture has changed radically — LLMs genuinely replace humans in dozens of tasks.

I think the core problems of physical AI will be resolved within 5 to 10 years — either through universal datasets and standards, or through engineering solutions. And when that happens, the pace of capability growth could shift dramatically.

2Digital: How will information about the physical world affect the “intelligence” of current AI?

Hleb: When we talk about robots, we want them to have grounded data — not just read, but seen, heard, felt, and processed. Why does that matter? Because when knowledge is only described, thinking is constrained by what’s been read. Right now, if nobody wrote that a stone sinks in water, AI simply doesn’t know it — and can’t even venture a guess.

Development is built on encountering something new for the first time and drawing conclusions from it. That’s a different paradigm entirely. We’re talking about a system where a machine enters an unfamiliar room, recognizes that it knows nothing about it, and needs to find something out. Today’s systems, by contrast, are built on a vast body of existing knowledge — and often never venture beyond it.

The physical world will give AI the ability to gather more information about its surroundings — and, interestingly, the ability to learn about itself. What’s distinctive about AI is that it can test new combinations of actions and draw conclusions, sometimes in unexpected ways.

Put it this way: today, very few people go around hugging trees. But if we sent robots to hug every tree on Earth, they might discover something new. Humans are held back by the conviction that it’s pointless — we simply never checked. Someone needs to test strange hypotheses. Robots could become exactly that kind of instrument.

2Digital: Why do most robots today still look so sluggish, even when AI is running them?

Hleb: We touched on this earlier, but there’s an important addition: it’s not just about reaction speed — it’s about multitasking. When a person walks around a car on the road, they’re simultaneously executing a dozen actions and processing dozens of possible scenarios. Robots don’t yet have a reliable enough predictive model to confidently select the optimal response.

There’s another critical gap — robots lack what you might call graceful recovery. Say you ask me a difficult question: I’ll still try to answer, even if I’m not sure. I won’t just go silent. I’ll improvise, steer the conversation elsewhere, crack a joke.

A robot that can’t pick up a cup might simply drop it or freeze abruptly and wait. Why? Because it doesn’t know how to get out of the situation. To continue, it needs to enter a recovery mode: roll back its actions to the last point where it understood what was happening around it — and only from there try again.
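The recovery mode described above can be sketched in a few lines. This is a minimal illustration, not how any particular robot stack works: the names `run_plan`, `execute`, and `restore` are hypothetical, and the sketch assumes each step can report whether it finished in a state the robot understands.

```python
from typing import Callable, List


def run_plan(steps: List[str],
             execute: Callable[[str], bool],
             restore: Callable[[int], None],
             max_retries: int = 2) -> str:
    """Run steps in order; on failure, roll back to the last confirmed point.

    execute(step) returns True if the step finished in a known state
    (hypothetical interface). restore(i) returns the robot to the state
    it was in just before step i (also hypothetical).
    """
    checkpoint = 0  # index of the first step that is not yet confirmed
    retries = 0     # failed attempts at the current step
    while checkpoint < len(steps):
        if execute(steps[checkpoint]):
            checkpoint += 1  # state is understood again: move the checkpoint
            retries = 0
        elif retries >= max_retries:
            # Give up safely and ask for help instead of freezing silently.
            return "needs_help"
        else:
            retries += 1
            restore(checkpoint)  # roll back, then try the same step again
    return "done"


# Usage with a simulated robot whose first grasp attempt fails.
attempts = {}

def fake_execute(step: str) -> bool:
    attempts[step] = attempts.get(step, 0) + 1
    return not (step == "grasp cup" and attempts[step] == 1)

restored = []
print(run_plan(["reach", "grasp cup", "lift"], fake_execute, restored.append))
```

The design choice worth noting is the bounded retry count: after a fixed number of failed attempts the loop returns a status a supervisor can act on, rather than retrying forever or stopping without explanation.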

The problem is that this kind of behavior is dangerous around people. When a robot fails, it freezes — and you can’t have a lagging machine operating near humans: on a road, in a hospital, at home, in a daycare, in a kitchen.

Then there’s dirty physics: edge cases we’ve never encountered before. Every apartment is unique, every kitchen is different — even the trash cans are different. Text in a book is stable: a finite set of symbols. The physical world is nearly unlimited in its combinations of properties. That’s why physical AI will advance fastest where conditions are more stable and predictable.

Humans get a pass. Robots don’t

2Digital: But humans make mistakes too — we drop things, we don’t react in time. Why aren’t robots given the same margin for error?

Hleb: Exactly. What’s forgivable in a human is unacceptable in a robot. And that’s already a legal and social problem. It takes only one incident where someone gets hurt because of a robot, and a mass reaction may well follow: “the machine uprising has begun.”

Even autonomous taxis exist only because developers managed to convince the public that statistically, they cause fewer accidents than human drivers. But that trust is extremely fragile.

2Digital: Perhaps a separate oversight layer could govern AI behavior — like a harness on a horse or a muzzle on a dog.

Hleb: Robots operating near people need to be maximally standardized. We can’t allow them to act as they see fit. In the same situation, they must behave consistently — so that no one gets hurt.

That’s why precision and the absence of hallucinations are critical for robots. We still haven’t fully eliminated hallucinations even in LLMs. What’s the problem with a hypothetical oversight layer? We have to be certain it works every single time. In many processes, we simply cannot afford to give a robot a “second opinion.”

Even giving an LLM control of a mouse cursor on a screen is already a risky gamble: such agents sometimes try to reach their goal by any means necessary and attempt to circumvent restrictions. In the physical world, that becomes an unacceptable risk.

One universal robot or many specialized machines?

2Digital: Is a universal robot actually achievable — the way we have general-purpose LLMs? Or is the future a fleet of specialized machines?

Hleb: If you look at the websites of humanoid robotics companies, they typically pursue two directions.

The first is a universal humanoid for all tasks. It may not be the most efficient in narrow scenarios, but if you can manufacture them in the millions, the unit economics become quite attractive and a meaningful share of human labor can be replaced.

The second is a dedicated robot for a specific job. A delivery robot doesn’t need to be a humanoid: the form factor of a robot dog may be more effective. On a factory floor, you often don’t need legs or five fingers; grippers, wheels, and cameras are enough. Sometimes you need a Frankenstein, not a human-shaped machine. And then the question becomes: why use a humanoid at all?

As for a universal home robot that does everything, from washing dishes to walking the dog, I don’t think one will work reliably within the next ten years. In some scenarios it will simply be too slow, and at a critical moment a human will need to step in.

Frankly, I don’t share the hype around humanoids — it looks like a bubble to me. There’s also the problem that many companies are building robots that look almost identical to each other, as though the question of form has already been settled, even though the tasks and environments vary enormously.

2Digital: How do you measure progress in physical AI without fooling yourself with demos? What metrics are genuinely honest?

Hleb: People often say: if a robot did something once, it means it can do it. That’s not how it works. In robotics, what matters is repeatability, robustness, and variability. There are a few real criteria for success.

First — success rate on long-horizon scenarios. The robot shouldn’t just pick up a cup; it needs to complete a chain: pour the coffee, carry it over, hand it to the visitor.

Second — how many human interventions are required: one a day or a hundred a day. That’s what human-in-the-loop actually means in practice.

Third — the ability to detect an atypical condition, degrade safely (stop, back away, ask for help), and then recover quickly enough to continue — rather than freezing up entirely.

Fourth — cost. A robot can potentially do almost anything today. But if it requires twenty cameras, a hundred lasers, and fifty scanners to do it, the business case collapses.
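The first two criteria can be computed from trial logs, as in the rough sketch below. The `Episode` record and the function names are hypothetical, not a standard benchmark format. The last function adds one assumption of our own: if every step in a chain succeeds independently with the same probability, the chance of finishing the whole chain is that probability raised to the number of steps. That is why a single successful demo says little about long-horizon reliability.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Episode:
    """One attempt at a multi-step task (hypothetical log format)."""
    steps_total: int      # steps in the chain, e.g. pour, carry, hand over
    steps_completed: int  # steps finished before the attempt ended
    interventions: int    # times a human had to step in


def long_horizon_success_rate(episodes: List[Episode]) -> float:
    """Share of attempts that finished the whole chain with no human help."""
    if not episodes:
        return 0.0
    clean = sum(1 for e in episodes
                if e.steps_completed == e.steps_total and e.interventions == 0)
    return clean / len(episodes)


def interventions_per_day(episodes: List[Episode], days: float) -> float:
    """Average number of human interventions per day of operation."""
    if days <= 0:
        raise ValueError("days must be positive")
    return sum(e.interventions for e in episodes) / days


def chain_success_probability(per_step: float, n_steps: int) -> float:
    """Chance of completing n steps, ASSUMING independent, equal step odds."""
    return per_step ** n_steps


episodes = [
    Episode(3, 3, 0),  # clean run
    Episode(3, 3, 1),  # finished, but only with human help
    Episode(3, 2, 0),  # failed at the last step
    Episode(3, 3, 0),  # clean run
]
print(long_horizon_success_rate(episodes))            # 0.5
print(interventions_per_day(episodes, days=2))        # 0.5
print(round(chain_success_probability(0.95, 3), 3))   # 0.857
```

Under that independence assumption, a robot that performs each of three steps correctly 95% of the time completes the full pour, carry, and hand-over chain only about 86% of the time, and the gap widens as chains get longer.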