OpenEvidence’s decision to exit the European market marks one of the first high-profile clashes between American health tech and new EU law. The platform, valued at $1.2 billion, supports US medics in millions of clinical consultations every month and is used by nearly 40 percent of doctors in the country. In its official statement, the company cited regulatory uncertainty as the primary reason for the pullout, directly pointing to the EU’s Artificial Intelligence Act and the lack of binding guidelines in the UK.

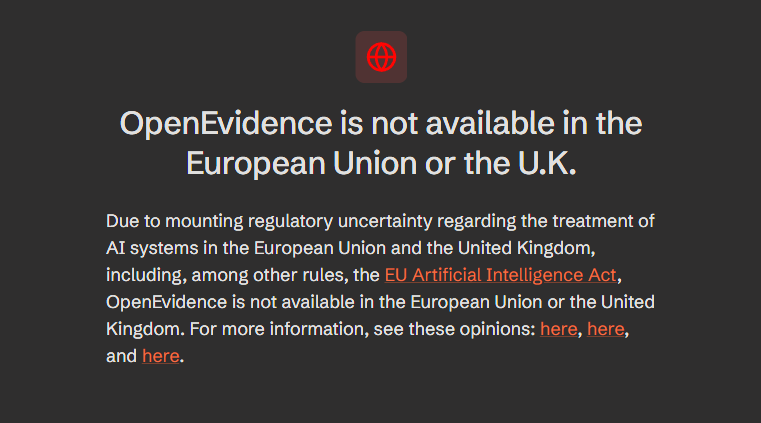

Visiting the OpenEvidence website, one can now only see the following message:

The news sparked a lively debate across the industry. Kimberly Breuer highlighted on her LinkedIn profile that European doctors have been cut off from a powerful, intelligent advisory tool. Experts point out that OpenEvidence’s retreat has nothing to do with monetization issues or startup runway. Industry outlet Distilled Post summarized the situation in its analysis:

“The decision to exit two major markets is not, therefore, a retreat by a company struggling for commercial traction. It reflects a calculated assessment that the legal risks of operating under the current European framework outweigh the near-term commercial opportunity.”

Brussels policymakers, however, have opted for radical protection of health and patient data. EU law classifies systems supporting medical diagnoses as “high-risk” tools. Before any such algorithm reaches EU hospitals, its creators must prove operational transparency, provide hard evidence of clinical validation, and establish precise liability rules in the event of an AI misdiagnosis.

Responding to criticism that the regulations stifle innovation, developers of European clinical systems argue that EU rules are not an insurmountable hurdle. A widely cited example in the debate is the Prof. Valmed platform, co-created by Professor Heinz Wiendl. It recently became the world’s first clinical assistant based on large language models (LLMs) to receive a Class IIb CE mark. This indicates strict compliance with the EU Medical Device Regulation (MDR) and full readiness for the incoming AI Act.

As the specialized portal Gesund.ai explains in its summary of the Valmed platform certification, the era of generic, experimental algorithms in medicine is finally coming to an end:

“Generic LLM benchmarks are now insufficient; regulators expect indication specific evidence linked to real world data sources.”

The strict approach of regulatory bodies is expected to yield long-term strategic benefits for Europe. Forcing developers to obtain rigorous medical certifications effectively weeds out algorithms prone to so-called “hallucinations” or those trained on unverified data. While the regulatory maze is currently repelling Silicon Valley players, it simultaneously lays the groundwork for a highly self-contained internal market. As a result, doctors working in the European Union gain a legal guarantee that the technologies cleared for use are completely safe and backed by ironclad clinical evidence. Still, a compromise between American innovation and European requirements could yet be hammered out in the future.